AI Health Misinformation Poses Growing Threat to Canadians, Expert Warns

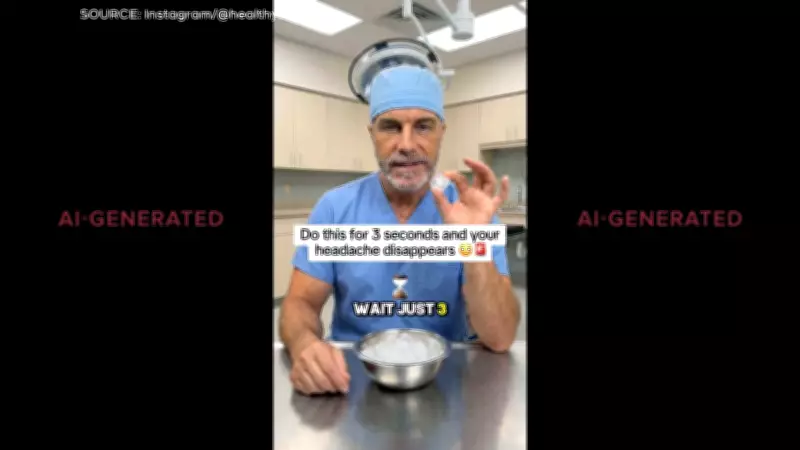

Health misinformation generated by artificial intelligence is becoming more sophisticated and harder to detect, posing significant risks to public health across Canada. According to Timothy Caulfield, a misinformation expert at the University of Alberta, the line between AI-created health content and legitimate medical information is blurring at an alarming rate.

The Challenge of Digital Deception

Caulfield emphasizes that advanced AI systems can now produce convincing health advice, create realistic-looking medical professionals, and generate health influencer content that appears authentic. As a result, ordinary Canadians may struggle to distinguish credible health information from potentially harmful misinformation, and Caulfield warns that this trend could lead people to make dangerous health decisions based on false or misleading claims.

Public Health Implications

The spread of AI-generated health content is a significant public health concern. When people cannot reliably identify trustworthy health information, they may follow advice that contradicts established medical guidelines or promotes unproven treatments. The risk is especially high for vulnerable populations who seek health solutions online without guidance from healthcare professionals.

Expert Insights on Detection Difficulties

Timothy Caulfield, a prominent researcher at the University of Alberta, has extensively studied how misinformation spreads in digital environments. His research indicates that:

- AI-generated health content often mimics the visual and linguistic patterns of legitimate medical information

- Sophisticated algorithms can create convincing fake medical professionals with realistic credentials

- The volume of AI-generated health content is increasing rapidly across social media platforms

- Traditional methods for identifying misinformation are becoming less effective against AI-generated content

Broader Context of Health Information Challenges

This warning about AI health misinformation comes amid other significant health developments in Canada, including:

- Approximately 3 million Canadian adults now using GLP-1 drugs, according to recent survey data

- Ongoing concerns about vaping in educational settings, with some schools implementing restrictive measures

- Investigations revealing potentially unsafe chemicals in food supplies

Together, these issues create a complex health information landscape in which Canadians must navigate both traditional health challenges and emerging digital threats. As AI technology advances, the need for better digital health literacy and verification tools grows more urgent.

Caulfield's warning is a reminder that while technology can help disseminate health information, it also creates new vulnerabilities that require proactive responses from healthcare providers, policymakers, and technology companies alike.